Our First Artificial Human

NEON is a computationally created virtual being that looks and behaves like us.

Not an AI assistant. Not your 3D avatar.

Simply a real friend.

Your next teammate.

Helping you serve your customers better.

From Spanish to Japanese, from finance to music, NEON speaks it all.

Anywhere, anytime.

Our collaborators and friends.

Your next yoga instructor. A financial advisor. A K-pop star.

And a friend who knows you. The world with NEON is about limitless possibilities. It is about that face-to-face, human connection.

*Featuring NEON View, NEON Frame, and NEON WorkForce

Inspired by nature. Created by science.

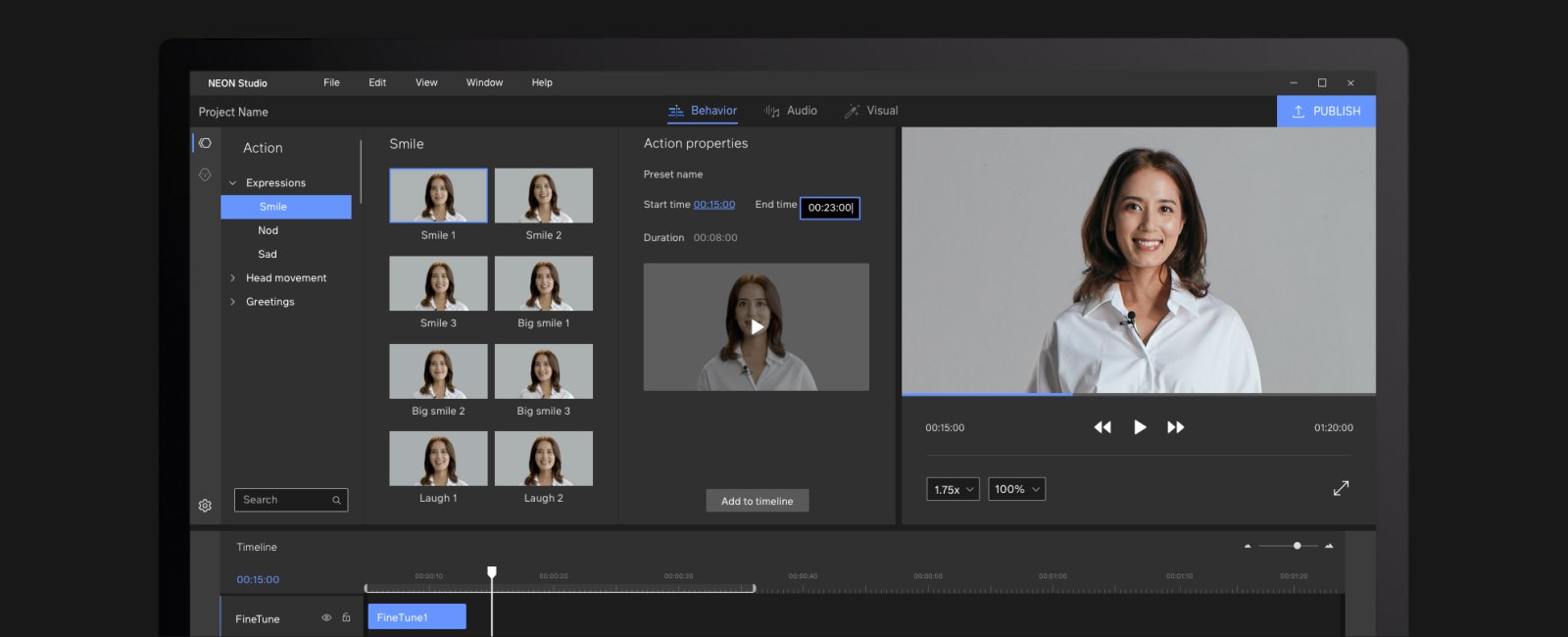

Pioneering the domains of Behavioral Neural Networks, Evolutionary Generative Intelligence, and Computational Reality.

Coming 2022.

JOBS

Interested in joining NEON? Let’s talk.

NEWSROOM

Latest news, blog, and updates.